I am a big fan and admirer of James Bach.

I have been following his videos and talks for some time now.

Here is a YouTube video of him giving a presentation on risk-based testing. He uses the example of a self-driving car and challenges the audience to do a risk analysis in real time.

If you are interested, please watch the video below:

Risk-Based Testing with James Bach – https://www.youtube.com/watch?v=Sz-WiCV2eh4

The video is an hour and a half long, so if you do not want to sit through all of it, here is a summary of the key points from his talk. It is a fascinating video and I hope you will enjoy it as much as I did.

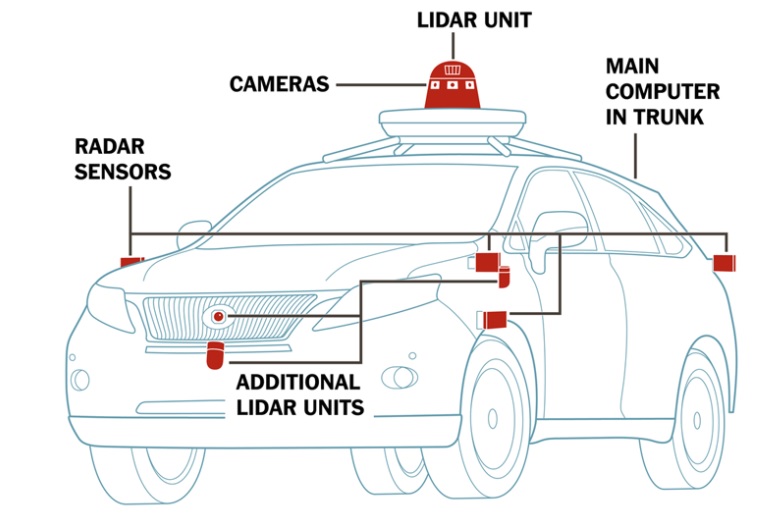

Let's take the example of a project that is developing a self-driving car.

You have been brought in as a Test Manager to lead a team of testers to test the application that drives this car.

What is the first thing you should do?

Assign a few testers to the project and ask them to list all the scenarios they can think of. Documented requirements will help here, if you have them.

To list the scenarios, James Bach recommends techniques such as open coding and backward coding.

Open coding is where you think about the self-driving car and what it does, and then create labels for those actions. You could come up with labels such as:

- Performance – scenarios that test the performance of the car

- User accessibility – scenarios that test how the user interacts with the car

- Ethical choices – scenarios that test how the car behaves when faced with ethical choices such as which road to choose at a fork if GPS provides no direction or GPS connection is lost

- Boundaries – scenarios that test the boundaries of the car operations

- Reaction – scenarios that test how the car behaves when, for example, someone suddenly appears in front of the car

- Operational – scenarios that test how the car behaves under normal conditions

- etc.

All scenarios stem from these labels.

Backward coding, on the other hand, means that you first list all the scenarios and then create labels from them.

Open coding is preferable to backward coding.
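To make the difference concrete, here is a minimal Python sketch of the two approaches. It is only an illustration and not something from the talk: the function names `empty_labels` and `backward_code` are made up, and the label I attached to each scenario is my own guess.

```python
from collections import defaultdict

# Open coding: the labels come first, and scenarios are written under them.
# A label with an empty list is a visible prompt to think of more scenarios.
open_coded = {
    "Reaction": ["Someone suddenly appears in front of the car"],
    "Ethical choices": ["GPS connection is lost at a fork in the road"],
    "Boundaries": [],
}


def empty_labels(coded):
    """Return the labels that do not have any scenario yet."""
    return [label for label, scenarios in coded.items() if not scenarios]


# Backward coding: the scenarios come first, and each one is tagged with a
# label afterwards (the tags here are hypothetical).
listed_scenarios = [
    ("Dust or tape covers the sensors", "Operational"),
    ("Traffic light is flashing yellow", "Reaction"),
    ("Someone suddenly appears in front of the car", "Reaction"),
]


def backward_code(tagged_scenarios):
    """Group already-listed scenarios by the label assigned to each one."""
    groups = defaultdict(list)
    for scenario, label in tagged_scenarios:
        groups[label].append(scenario)
    return dict(groups)


print(empty_labels(open_coded))
print(backward_code(listed_scenarios))
```

With open coding, the empty "Boundaries" label shows up as a gap straight away. With backward coding, a label only exists once somebody has already thought of a scenario for it.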

Once your team has listed all the scenarios, call a group session to discuss them. This lets the team members bounce scenarios off each other and may highlight gaps that nobody had thought of. Scenarios can then be added or removed purely on their merit and by group agreement.

Some examples of scenarios that would not have registered with the testers in the initial stages are as below:

- What if dust or tape covers the sensors? How would the car behave?

- What if a person wishing to steal the car stands in front of it? Does the car stop because it detects an object in front?

- If there is roadwork happening and the car needs to slow down, how would the car behave?

- What if a traffic light is flashing yellow? How would the car behave?

- Since the car drives based on GPS data, if there is a change in the speed limit on the road, how would the car behave?

- On a winding road where you cannot see beyond the curve, how would the car behave?

- When someone suddenly appears in front of the car, how would the car react?

So there is tremendous benefit in team members bouncing ideas off each other.

Next, create a risk catalogue for the project (in this case, for the technology that drives self-driving cars). Note that "technology" here means applications that run using GPS and sensors.

The purpose of the risk catalogue is to list all the types of defects you expect to find in a product built on that specific technology. As a Test Manager, it is important that you create the risk catalogue so that, when the full scope of testing is locked down, areas that have the potential to introduce defects are not overlooked and are included in the testing scope.

It is IMPORTANT that as a Test Manager, you build risk catalogues across different technologies over the years, so that next time you take on a project, you can refer back to your existing risk catalogue for that technology and open coding labels to quickly come up with a testing scope.
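A risk catalogue can be as simple as a spreadsheet. As a rough sketch of how it can be checked against the testing scope, here is a small Python example. Everything in it is hypothetical: the `RiskEntry` fields, the catalogue entries, and the `uncovered_risks` helper are my own illustration, not a format James Bach prescribes.

```python
from dataclasses import dataclass, field


@dataclass
class RiskEntry:
    """One expected defect type for a given technology (hypothetical format)."""
    technology: str
    defect_type: str
    labels: set = field(default_factory=set)  # open coding labels it relates to


# Illustrative catalogue entries for GPS- and sensor-driven applications.
catalogue = [
    RiskEntry("sensors", "Sensor is covered by dust or tape", {"Operational"}),
    RiskEntry("GPS", "GPS connection is lost", {"Ethical choices"}),
    RiskEntry("GPS", "Speed limit in GPS data is out of date", {"Boundaries"}),
]

# Current testing scope: scenarios grouped by open coding label.
scope = {
    "Operational": ["Dust or tape covers the sensors"],
    "Ethical choices": ["Which road to choose at a fork when GPS is lost"],
}


def uncovered_risks(catalogue, scope):
    """Return catalogue entries whose labels have no scenario in the scope."""
    covered_labels = {label for label, scenarios in scope.items() if scenarios}
    return [risk for risk in catalogue if not (risk.labels & covered_labels)]


for risk in uncovered_risks(catalogue, scope):
    print(f"Not in scope yet: {risk.technology}: {risk.defect_type}")
```

Here the speed limit risk is flagged because nothing in the scope sits under "Boundaries". A check like this only works at the level of labels, so it is a prompt for discussion rather than proof of coverage.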

Now that you have created the risk catalogue and all the scenarios (through open coding), it is time to perform the final risk analysis. James Bach recommends the methods below.

- Visualization – Visualise yourself in the Self Driving Car and then think of the things that can happen. Use that knowledge to find gaps in your testing scope.

- Linguistic risk analysis – Look at the labels defined in open coding and think of any other gaps within the testing scope for that category.

- Geometric risk analysis – Look at the self-driving car from the perspective of mathematics. For example, what happens if the speed of the car is increased to 100km/h in a 60km/h section of the road? What happens if two sensors pick up the same data?

- Surface Integrity analysis – Look at scenarios where the surface area of the car is impacted. For example, what happens when the car's temperature or speed changes dramatically, or when dust causes the onboard computer to detect that a door is still open after it has closed?

- Opinion Polling – Finally, run another group session to discuss the current scope with the team members. If no other scenarios are identified, then your testing scope is complete. It is time to write test scripts and start testing.
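The speed example under geometric risk analysis lends itself to a small sketch. The Python below is my own reading of it, using plain boundary values around the speed limit plus the extreme case from the example. The function names and the 1km/h step are assumptions, not something from the talk.

```python
def boundary_speeds(limit_kmh, step=1):
    """Speeds just below, exactly at, and just above the speed limit."""
    return [limit_kmh - step, limit_kmh, limit_kmh + step]


def speed_cases(limit_kmh, extreme_kmh):
    """Pair each speed to test with whether it exceeds the limit.

    The second value is what a test would expect the car to detect.
    """
    speeds = boundary_speeds(limit_kmh) + [extreme_kmh]
    return [(speed, speed > limit_kmh) for speed in speeds]


# The 60km/h section of road from the example, with the 100km/h extreme.
for speed, over_limit in speed_cases(60, 100):
    print(f"{speed} km/h in a 60 km/h zone, over the limit: {over_limit}")
```

Each pair can then become a scenario in the testing scope, for example "car is doing 61km/h in a 60km/h section".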

Once you complete this exercise, you cannot be faulted for not following a scientific approach to testing.

Yes, bugs may still slip through to production, but they are unlikely to be sev-1 (Critical) or sev-2 (Major) defects.